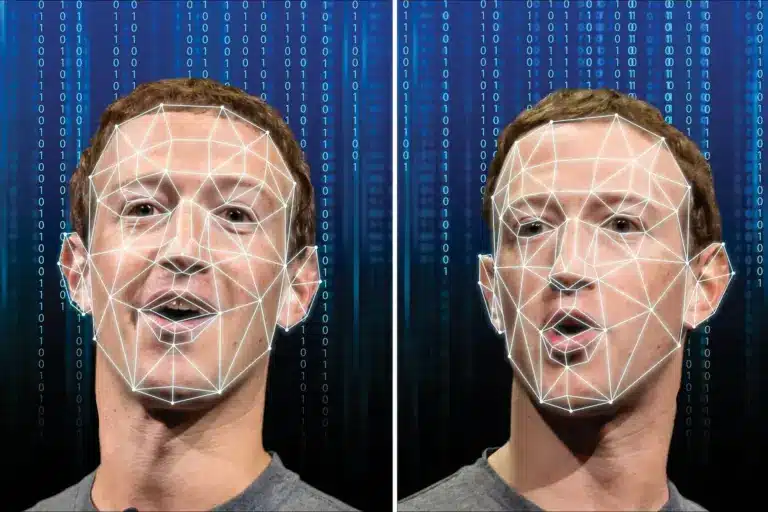

About Deepfakes:

- Deepfakes are synthetic media, including images, videos, and audio, generated by artificial intelligence (AI) technology that portray something that does not exist in reality or events that have never occurred.

- The term "deepfake" combines "deep", taken from AI deep-learning technology (a type of machine learning that involves multiple levels of processing), and "fake", indicating that the content is not real.

- Deepfake technology can create people who do not exist, and it can show real people saying and doing things they never said or did.

- Background: The word “deepfake” can be traced back to 2017, when a Reddit user with the username “deepfakes” posted explicit videos of celebrities.

- Working:

  - Deepfakes are created by machine learning models, which use neural networks to manipulate images and videos.

  - To make a deepfake video of someone, a creator first trains a neural network on many hours of real video footage of the person, giving it a realistic “understanding” of what that person looks like from many angles and under different lighting.

  - The creator then combines the trained network with computer-graphics techniques to superimpose a copy of the person onto a different actor.

- Deepfake technology is now being used for nefarious purposes such as scams and hoaxes, celebrity pornography, election manipulation, social engineering, automated disinformation attacks, identity theft, and financial fraud.

Deepfakes differ from other forms of false information in that they are very difficult to identify as false.

Q1: What is machine learning?

Machine learning, often abbreviated as ML, is a subset of artificial intelligence (AI) that focuses on developing computer algorithms that improve automatically through experience and the use of data. In simpler terms, machine learning enables computers to learn from data and make decisions or predictions without being explicitly programmed to do so.
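As a rough illustration of "learning from data", the sketch below fits a straight line to a handful of example points and then predicts a value it has never seen. The function names (`fit_line`, `predict`) and the hours-versus-marks data are hypothetical, chosen only for this example. Real deepfake systems use far larger neural networks, but the basic idea of deriving a rule from examples instead of hand-coding it is the same.

```python
def fit_line(xs, ys):
    """Learn a slope and intercept from example (x, y) pairs using least squares."""
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    # How x and y vary together, and how much x varies on its own.
    cov = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    var = sum((x - mean_x) ** 2 for x in xs)
    slope = cov / var
    intercept = mean_y - slope * mean_x
    return slope, intercept


def predict(model, x):
    """Apply the learned rule to a new input."""
    slope, intercept = model
    return slope * x + intercept


# Hypothetical training data: hours studied -> marks scored.
hours = [1, 2, 3, 4, 5]
marks = [12, 22, 32, 42, 52]

# The rule "marks = 10 * hours + 2" is never written in the code;
# it is recovered from the examples.
model = fit_line(hours, marks)
print(predict(model, 6))
```

Nothing in the program states the relationship between hours and marks; the program recovers it from the five examples and applies it to the unseen input of 6 hours.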

Source: South Korea battles surge of deepfake pornography after thousands found to be spreading images

Last updated in April 2026
